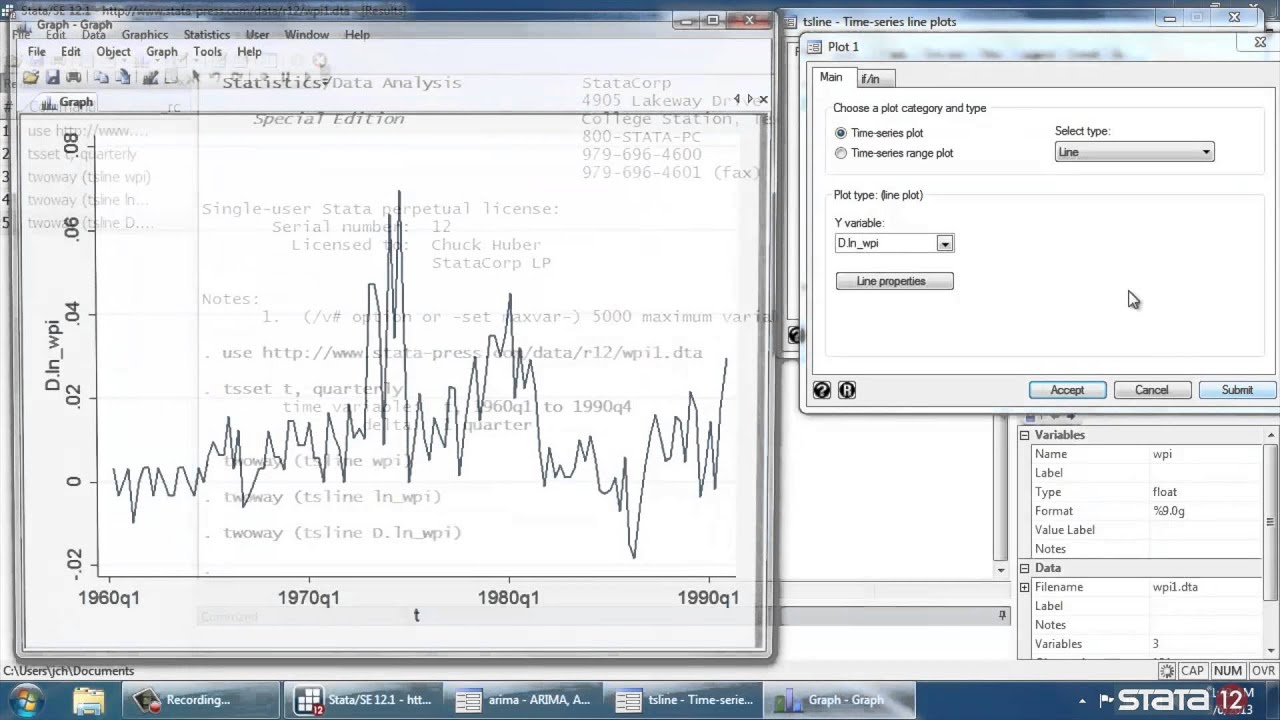

- Arma syntax stata 12

Arma syntax stata 12 series

The two most common settings are $\min(20,T-1)$ and $\ln T$, where $T$ is the length of the series, as you correctly noted.

The first one is supposed to be from the authoritative book by Box, Jenkins, and Reinsel, Time Series Analysis: Forecasting and Control (Englewood Cliffs, NJ: Prentice Hall, 1994). However, what they say about the lags on p. 314 is not a strong argument or suggestion by any means, yet people keep repeating it from one place to another.

The second setting for a lag is from Tsay, R. (Hoboken, NJ: John Wiley & Sons, Inc., 2005). Here's what he wrote on p. 33: the choice of $m \approx \ln(T)$ provides better power performance. This is a somewhat stronger argument, but there's no description of what kind of study was done.

Arma syntax stata 12 how to

What are you actually trying to use the $Q$ test for? The common reason is to be more or less confident about the joint null hypothesis of no autocorrelation up to lag $h$ (alternatively, assuming that you have something close to weak white noise) and to build a parsimonious model with as few parameters as possible. Time series data usually have a natural seasonal pattern, so a practical rule of thumb is to set $h$ to twice the seasonal period. Another consideration is the forecasting horizon, if you use the model for forecasting. Finally, if you find significant departures at later lags, think about corrections: could they be due to seasonal effects, or to data that were not corrected for outliers?

Regarding "Rather than using a single value for $h$, suppose that I do the Ljung-Box test for all $h<50$, and then pick the $h$ which gives the minimum $p$ value": it's a joint significance test, so if the choice of $h$ is data-driven, why should I care about some small (occasional?) departures at any lag less than $h$, supposing that $h$ is much less than $n$ of course (the power of the test you mentioned)? Seeking a simple yet relevant model, I suggest the information criteria as described below.

Regarding "My question concerns how to interpret the test if $p<0.05$ for some values of $h$ and not for other values": it will depend on how far from the present the departure happens. Disadvantages of far departures: more parameters to estimate, fewer degrees of freedom, worse predictive power of the model. Try to estimate the model including the MA and/or AR parts at the lag where the departure occurs, and additionally look at one of the information criteria (either AIC or BIC, depending on the sample size); this will give you more insight into which model is more parsimonious. Any out-of-sample prediction exercises are also welcome here.
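The lag rules discussed above can be put side by side in a few lines of code. The following is a minimal sketch in plain Python, not a replacement for a statistics package: the function names `autocorr`, `ljung_box_q`, and `candidate_lags` are made up for this illustration, and rounding $\ln T$ to the nearest integer is an assumption, since the text does not say how to turn it into a lag count. It computes the Ljung-Box statistic $Q = n(n+2)\sum_{k=1}^{h} \hat r_k^2/(n-k)$ for each candidate $h$; p-values (which compare $Q$ with a chi-squared distribution) are left out to keep the example dependency-free.

```python
import math
import random


def autocorr(x, k):
    """Sample autocorrelation of the series x at lag k (k >= 1)."""
    n = len(x)
    mean = sum(x) / n
    denom = sum((v - mean) ** 2 for v in x)
    num = sum((x[t] - mean) * (x[t - k] - mean) for t in range(k, n))
    return num / denom


def ljung_box_q(x, h):
    """Ljung-Box Q statistic based on lags 1..h."""
    n = len(x)
    return n * (n + 2) * sum(autocorr(x, k) ** 2 / (n - k) for k in range(1, h + 1))


def candidate_lags(n, seasonal_period=None):
    """Lag choices mentioned in the text for a series of length n.

    Rounding ln(n) to the nearest integer is an assumption of this sketch.
    """
    lags = {
        "min(20, T-1)": min(20, n - 1),
        "ln T": max(1, round(math.log(n))),
    }
    if seasonal_period is not None:
        # Rule of thumb from the text: twice the seasonal period.
        lags["2 x seasonal period"] = min(2 * seasonal_period, n - 1)
    return lags


# Illustrative data only: seeded Gaussian noise standing in for model residuals.
rng = random.Random(0)
series = [rng.gauss(0.0, 1.0) for _ in range(120)]
for rule, h in candidate_lags(len(series), seasonal_period=12).items():
    print(f"{rule}: h = {h}, Q = {ljung_box_q(series, h):.3f}")
```

Because every term in the sum is non-negative, $Q$ can only grow as $h$ grows; what changes the verdict across lags is the reference distribution, whose degrees of freedom grow with $h$ as well.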

Arma syntax stata 12 full

Assume that we specify a simple AR(1) model, with all the usual properties. If we could observe the error term, we could compute its sample autocorrelation, $\tilde \rho_j$, directly. In practice we only have the residuals, whose sample autocorrelation is $\hat \rho_j$. Of the expected values that arise when the residuals are substituted for the errors, the first is zero by the assumptions of the standard AR(1) model. But the second is not, since the dependent variable depends on past errors.

So $\sqrt n\hat \rho_j$ won't have the same asymptotic distribution as $\sqrt n\tilde \rho_j$. But the asymptotic distribution of the latter is standard Normal, which is the one leading to a chi-squared distribution when squaring the random variable. Therefore we conclude that in a pure time series model, the Box-Pierce Q and the Ljung-Box Q statistics cannot be said to have an asymptotic chi-squared distribution, so the test loses its asymptotic justification.

This happens because the right-hand-side variable (here the lag of the dependent variable) is by design not strictly exogenous to the error term, and such strict exogeneity is required for the BP/LB Q-statistic to have the postulated asymptotic distribution. Here the right-hand-side variable is only "predetermined", and the Breusch-Godfrey test is then valid (for the full set of conditions required for an asymptotically valid test, see Hayashi 2000).
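The difference between the two scaled autocorrelations can be checked numerically. The sketch below is a small Monte Carlo experiment in plain Python under assumptions chosen purely for illustration: Gaussian errors, $\beta = 0.5$, $n = 200$, a no-intercept least-squares fit, and lag $j = 1$. All function names are hypothetical. If the argument above holds, the variance of the scaled lag-1 autocorrelation of the true errors should be close to 1, while the variance of the same quantity computed from the fitted residuals should be clearly smaller.

```python
import random
import statistics


def simulate_ar1(n, beta, rng, burn=50):
    """Simulate y_t = beta * y_{t-1} + u_t and return (y, u) after a burn-in."""
    y, u = [], []
    prev = 0.0
    for t in range(n + burn):
        e = rng.gauss(0.0, 1.0)
        prev = beta * prev + e
        if t >= burn:
            y.append(prev)
            u.append(e)
    return y, u


def lag1_autocorr(x):
    """Lag-1 sample autocorrelation of a zero-mean series (no demeaning)."""
    num = sum(x[t] * x[t - 1] for t in range(1, len(x)))
    den = sum(v * v for v in x)
    return num / den


def scaled_lag1_variances(n=200, beta=0.5, reps=400, seed=0):
    """Return (variance from residuals, variance from true errors) of sqrt(m) * rho_1."""
    rng = random.Random(seed)
    from_residuals, from_errors = [], []
    for _ in range(reps):
        y, u = simulate_ar1(n, beta, rng)
        # No-intercept least-squares estimate of beta from y_t on y_{t-1}.
        b_hat = sum(y[t] * y[t - 1] for t in range(1, n)) / sum(
            y[t - 1] ** 2 for t in range(1, n)
        )
        resid = [y[t] - b_hat * y[t - 1] for t in range(1, n)]
        errors = u[1:]
        m = len(resid)
        from_residuals.append(m ** 0.5 * lag1_autocorr(resid))
        from_errors.append(m ** 0.5 * lag1_autocorr(errors))
    return statistics.variance(from_residuals), statistics.variance(from_errors)


var_resid, var_err = scaled_lag1_variances()
print(f"variance using residuals:   {var_resid:.3f}")
print(f"variance using true errors: {var_err:.3f}")
```

A residual-based variance well below 1 means that squaring and summing such terms does not produce the chi-squared reference distribution that the Q statistic assumes.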